VMware: vxlan to vxlan traffic randomly fails or only works on the same ESXi host...

Summary:

Here are the basics:

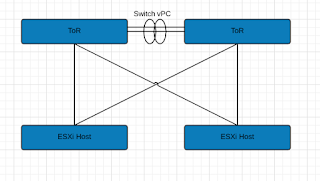

- Leaf/Spine Architecture (basic illustration only shows the ToRs)

- vSphere 6.5U1 / vSAN 6.6

- NSX 6.3.3

- Multi-VTEP Deployment w/ LoadBalance-SRCID

- Standard VLAN for VTEP connections.

- 2x Nexus 9K ToRs

- Dell R630's

Long story short, the vPC between the switches was stripping the VLAN ID from frames before handing them to the peer ToR, which then passed them on to the ESXi host. The ESXi host dropped the untagged frames, causing these strange issues. Load Balance SRCID with Multi-VTEP made this especially difficult to figure out because the failures looked basically random. The vPC link has a configuration advantage, so in order to keep it, we ran additional links between the switches and set them up as standard trunk connections. Once that was done, we configured our NSX VTEP VLAN to traverse those trunk connections rather than the vPC. This resolved our stripping issue.

See past the page break for tools and more details on what we (mostly VMware NSX senior support staff) did to figure this out.

[FYI: Cisco's recommendation appears to be to use a vPC between switches only if the downstream host links use port channels (LACP) as well. There are factors in play in the larger scheme of the network fabric, but this is from the viewpoint of a compute engineer.]

Symptoms:

Two VMs on two different ESXi hosts sharing the same pair of ToRs would only sometimes communicate on the same VXLAN (VNI). When we failed an uplink (essentially taking out one ToR), all traffic passed normally and NSX was happy. Unicorns abounded. But when the second ToR was brought back in, connections again ranged from very flaky to completely dead.

Troubleshooting:

Let me just say, VMware NSX support is ridiculously good, because there is no way I would have figured out how to do this crap on my own. It was thanks to their thoroughness that we could point back to the physical infrastructure as the source of our issues.

Useful packet capture cli lines you can run from the ESXi host:

pktcap-uw --uplink vmnic0 --dir 1 --stage 1 -c 250 -o /tmp/source_vmnicx.pcapng --ng

Captures 250 packets (-c 250) of outgoing (--dir 1) traffic on physical vmnic0 (--uplink vmnic0) as it leaves the physical NIC (--stage 1) of the ESXi host, and writes them to a capture file in /tmp (-o) in pcapng format (--ng).

pktcap-uw --uplink vmnic0 --dir 0 --stage 0 -c 250 -o /tmp/destination_vmnicx.pcapng --ng

Captures 250 packets (-c 250) of incoming (--dir 0) traffic on physical vmnic0 (--uplink vmnic0) as it enters the physical NIC (--stage 0) of the ESXi host, and writes them to a capture file in /tmp (-o) in pcapng format (--ng).

The above commands basically capture packets at the closest point to the physical network. Other capture points are available closer to the hypervisor, the VM, etc. See here for all options. To figure out which physical adapters you need to capture from, see the notes at the bottom of the page.

In my case, this made sense since VMware support had already explored every other facet. What they found was quite interesting. To properly troubleshoot this issue, choose two hosts and two VMs and run a simple continuous ping test between them (assuming the NSX DFW, if enabled, allows that traffic). Then run the appropriate capture command on your source and destination hosts.

Once we had captured the traffic and found our ICMP packets, we saw that the VMs that were working were traversing the same ToR switch. The VMs that weren't working were the ones whose traffic traversed the vPC between the ToR switches.
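Why this looked random can be sketched with a toy model. This is a simplification, not a description of how NSX or the Nexus switches actually forward traffic. It assumes each host has one uplink to each ToR, that Load Balance SRCID pins a VM to one of those uplinks, and that the VLAN tag is lost only when a frame has to cross the vPC between the ToRs. The names `TORS`, `crosses_peer_link` and `classify_paths` are made up for illustration.

```python
from itertools import product

# Hypothetical labels for the two top-of-rack switches.
TORS = ("ToR-A", "ToR-B")


def crosses_peer_link(src_tor: str, dst_tor: str) -> bool:
    """A frame must cross the inter-switch link when the source and
    destination uplinks land on different ToRs (toy assumption)."""
    return src_tor != dst_tor


def classify_paths(tors=TORS):
    """Label every source/destination ToR combination as 'works' or 'fails',
    assuming the VLAN tag is stripped only on the inter-switch link."""
    results = {}
    for src_tor, dst_tor in product(tors, repeat=2):
        failed = crosses_peer_link(src_tor, dst_tor)
        results[(src_tor, dst_tor)] = "fails" if failed else "works"
    return results


if __name__ == "__main__":
    for (src, dst), outcome in classify_paths().items():
        print(f"source uplink on {src} -> destination uplink on {dst}: {outcome}")
```

With both ToRs in play, two of the four uplink combinations fail. With a single ToR (`classify_paths(("ToR-A",))`) every path works, which lines up with what we saw when we failed an uplink.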

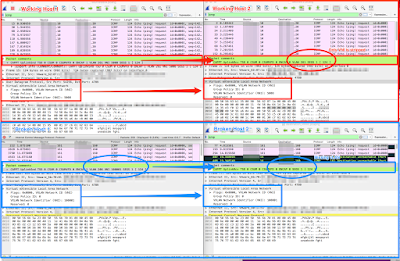

Showing what a vxlan packet should look like leaving and arriving at a host

Essentially, the vPC was stripping out the VLAN ID when passing the frame to the peer ToR on its way to the destination ESXi host. When the ESXi host received the packet, it had no VLAN ID, so the host dropped it even though the VNI (VXLAN) information was still there.
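The check we did by eye in the captures (is the outer frame still VLAN tagged, and is the VNI still there?) can be sketched in a few lines. This is a minimal sketch, not something VMware support ran. It works on the raw bytes of a single Ethernet frame and handles only IPv4 with a single 802.1Q tag; getting the frames out of the pcapng file is left out. The function names are made up, and the UDP port is an assumption: 4789 is the IANA VXLAN port, but your NSX deployment may be configured for a different one (8472 on some older installs), so check your own setting.

```python
import struct
from typing import Optional

ETHERTYPE_VLAN = 0x8100  # 802.1Q tag
ETHERTYPE_IPV4 = 0x0800
IPPROTO_UDP = 17


def outer_vlan_id(frame: bytes) -> Optional[int]:
    """Return the outer 802.1Q VLAN ID, or None if the frame is untagged."""
    if len(frame) < 14:
        return None
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    if ethertype != ETHERTYPE_VLAN or len(frame) < 16:
        return None
    (tci,) = struct.unpack_from("!H", frame, 14)
    return tci & 0x0FFF  # low 12 bits of the tag are the VLAN ID


def vxlan_vni(frame: bytes, udp_port: int = 4789) -> Optional[int]:
    """Return the VNI if the frame is IPv4/UDP VXLAN on udp_port, else None."""
    if len(frame) < 14:
        return None
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    offset = 14
    if ethertype == ETHERTYPE_VLAN:
        if len(frame) < 18:
            return None
        (ethertype,) = struct.unpack_from("!H", frame, 16)
        offset = 18  # skip the 4-byte VLAN tag
    if ethertype != ETHERTYPE_IPV4 or len(frame) < offset + 20:
        return None
    ihl = (frame[offset] & 0x0F) * 4  # IPv4 header length in bytes
    if ihl < 20 or frame[offset + 9] != IPPROTO_UDP:
        return None
    udp = offset + ihl
    if len(frame) < udp + 16:  # 8-byte UDP header + 8-byte VXLAN header
        return None
    (dst_port,) = struct.unpack_from("!H", frame, udp + 2)
    if dst_port != udp_port:
        return None
    vxlan = udp + 8
    if not frame[vxlan] & 0x08:  # the "VNI present" flag must be set
        return None
    return int.from_bytes(frame[vxlan + 4:vxlan + 7], "big")


def is_untagged_vxlan(frame: bytes, udp_port: int = 4789) -> bool:
    """True for the symptom described above: VNI present, outer VLAN tag missing."""
    return vxlan_vni(frame, udp_port) is not None and outer_vlan_id(frame) is None
```

A frame captured on the destination host for which `is_untagged_vxlan` returns True matches what we saw on the failing paths: the VXLAN header is intact but the outer VLAN tag is gone.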

Notes:

To determine the physical adapter or vmk# your VM's traffic is leaving from and arriving on:

- On Source/Destination Host(s):
  - esxcli network vm list
    - Record the World ID.
  - esxcli network vm port list --world-id=#####
    - Record the DVPort ID, Port ID, and Team Uplink (this is likely the physical adapter you need to capture from).
  - esxcli network vswitch dvs vmware vxlan vmknic list --vds-name="nameofyourVDS/DVS"
    - Record the vmknic names and their associated EndPoint IDs.
  - esxcli network vswitch dvs vmware vxlan network port list --vds-name "nameofyourVDS/DVS" --vxlan-id=#######
    - With your VM's DVPort ID, you can match up the vmknic ID to the EndPoint ID to determine the vmk# your vxlan traverses.
