Azure VMware Solution: NSX-T Active/Active T0 Edges...but

Summary:

Azure VMware Solution (AVS) is delivered by default with a pair of redundant Large NSX-T Edge VMs, each running a T0 in active/active mode. So why is my traffic only going out one Edge VM?

Short answer:

The default T1 delivered with AVS, the one you connect your workloads to, is an active/passive T1. So while traffic could technically take either T0, it will always go out the T0 closest to the T1's active "SR". Where do the SRs live? You guessed it, on the Edge VMs. As you can imagine, this can lead to a bottleneck if you try to shove all your traffic through a single Edge VM.

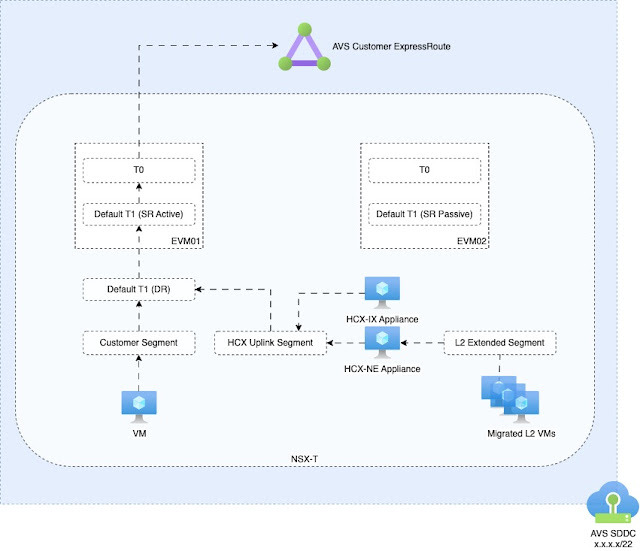

Simple Diagram:

Longer answer with Options:

The return path could come back via either T0, but guess what: the T0 that receives the packet will pass the traffic to the T1's active "SR" to be processed. This means that, by default, a single EVM processes all inbound and outbound traffic. It wasn't always this way. AVS used to deploy with an active/active T1. So what changed? We needed a way for customers to resolve internal DNS addresses for their vCenter, NSX-T, etc. Thus the NSX-T DNS forwarder on the default T1 was born.

This meant the default T1 became an active/passive one. I emphasize "default" because you don't have to use the default T1. It can simply be left alone. You can create as many T1s as NSX-T supports, and creating T1s for your own workloads is recommended.

Choices:

You have choices when it comes to T1s. You can deploy an active/active or an active/passive T1. The basic difference between the two is whether or not you attach an Edge Cluster to the T1. Attaching an Edge Cluster enables that T1 to run services like DHCP, DNS, stateful firewall, etc.

- Active/Active:
  - Not attaching the T1 to an Edge Cluster means the T1 only deploys DRs, which are active/active by default.
  - ✅ Chooses the EVM to route through randomly, via ECMP.
  - ⚠️ This is not load based.
  - ⚠️ Persistent traffic types (like HCX IPSec L2 Extension tunnels) will establish on a randomly chosen T0/EVM and ALWAYS traverse it.
  - Good for non-persistent traffic types, as ECMP randomly calculates which T0/EVM to send new traffic out of.
  - ❌ CANNOT use active services (NAT/DHCP/DNS/Firewall) on the T1 in this setup.

Simply not setting an Edge Cluster makes the T1 run as DR only.
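To make the "random but not load based" point concrete, here is a minimal toy sketch of hash-based path selection. It is not the actual NSX-T ECMP algorithm: the EVM names, the hash function, and the choice of tuple fields are all assumptions made for illustration.

```python
import hashlib
from collections import Counter

# Hypothetical names for the two AVS Edge VMs.
EDGE_VMS = ["EVM1", "EVM2"]

def pick_evm(src_ip, dst_ip, src_port, dst_port, proto="tcp"):
    """Pick an Edge VM from a hash of the flow tuple (illustrative only).

    Note what is NOT an input: the current load on each EVM.
    """
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}|{proto}".encode()
    digest = hashlib.sha256(key).digest()
    return EDGE_VMS[int.from_bytes(digest[:4], "big") % len(EDGE_VMS)]

# A persistent flow (think of one long-lived tunnel) lands on the same
# EVM every time, no matter how busy that EVM is.
tunnel = ("10.0.0.10", "192.168.50.1", 4500, 4500, "udp")
tunnel_evms = {pick_evm(*tunnel) for _ in range(1000)}

# Many short-lived flows with different source ports spread across both EVMs.
flows = [("10.0.0.10", "203.0.113.7", port, 443) for port in range(20000, 21000)]
spread = Counter(pick_evm(*flow) for flow in flows)

print("tunnel pinned to:", tunnel_evms)
print("short-lived flows per EVM:", dict(spread))
```

The tunnel always maps to one EVM, while the thousand short flows end up split across both. That is why this mode suits non-persistent traffic better than a handful of heavy, long-lived tunnels.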

- Active/Passive:
  - Attach an Edge Cluster.
  - ⚠️ Must semi-manually balance traffic between the two EVMs.
  - ✅ CAN use active services (NAT/DHCP/DNS/Firewall) on T1s in this setup.
  - Recommend setting the failover mode to "Preemptive" (failback) to control which EVM traffic will ALWAYS flow over. This ensures traffic always flows over the EVM chosen as primary, assuming "auto allocated" was not chosen.
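The "semi-manual" balancing above can be sketched as alternating the preferred EVM across your active/passive T1s. The snippet below only builds request bodies; it makes no API calls. The field names (`failover_mode`, `tier0_path`, `edge_cluster_path`, `preferred_edge_paths`) reflect my understanding of the NSX-T Policy API and should be verified against the API reference for your NSX-T version. Every path and T1 name is a hypothetical placeholder.

```python
# Hypothetical placeholder paths; substitute the real IDs from your environment.
TIER0_PATH = "/infra/tier-0s/<tier0-id>"
EDGE_CLUSTER_PATH = (
    "/infra/sites/default/enforcement-points/default/edge-clusters/<cluster-id>"
)
EDGE_NODE_PATHS = [
    EDGE_CLUSTER_PATH + "/edge-nodes/<evm1-node-id>",
    EDGE_CLUSTER_PATH + "/edge-nodes/<evm2-node-id>",
]

def tier1_payloads(name, preferred_edge_path):
    """Build (tier-1 body, locale-services body) for one active/passive T1."""
    tier1 = {
        "display_name": name,
        "tier0_path": TIER0_PATH,
        # Preemptive: fail back to the preferred EVM once it recovers.
        "failover_mode": "PREEMPTIVE",
    }
    locale_services = {
        # Attaching an Edge Cluster is what makes the T1 active/passive.
        "edge_cluster_path": EDGE_CLUSTER_PATH,
        # Pin the active SR instead of relying on auto allocation.
        "preferred_edge_paths": [preferred_edge_path],
    }
    return tier1, locale_services

def plan_tier1s(names):
    """Alternate the preferred EVM so the T1s are spread across both EVMs."""
    return {
        name: tier1_payloads(name, EDGE_NODE_PATHS[i % len(EDGE_NODE_PATHS)])
        for i, name in enumerate(names)
    }

plan = plan_tier1s(["t1-app", "t1-db", "t1-web"])
for name, (tier1, locale_services) in plan.items():
    print(name, "->", locale_services["preferred_edge_paths"][0])
```

Alternating by count is the simplest split. In practice you would weight the assignment by how much traffic each T1 is expected to carry, since two T1s are rarely equally busy.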

|

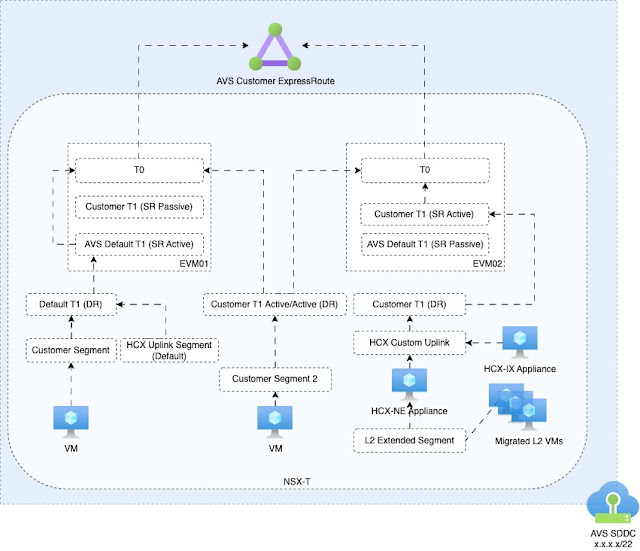

| Example of ways T1's can be deployed along the existing default AVS T1. |

Acronyms:

SR = Service Router

DR = Distributed Router

T0 = Tier-0 Router

T1 = Tier-1 Router

EVM = Edge VM

AVS = Azure VMware Solution
